This post is a continuation of the first look report on the recently released Sentinel-3 level 1 data from the OLCI and SLSTR instruments. The first part provided general context and discussed the general form of data distribution; this second part looks in more detail at the data itself.

The product data grid

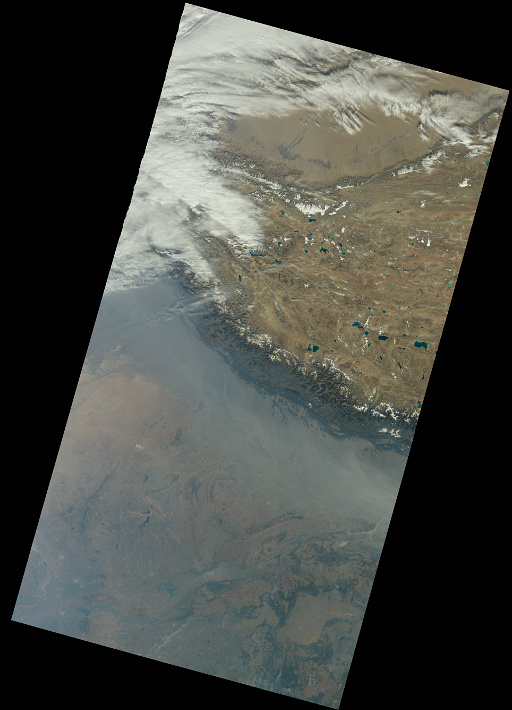

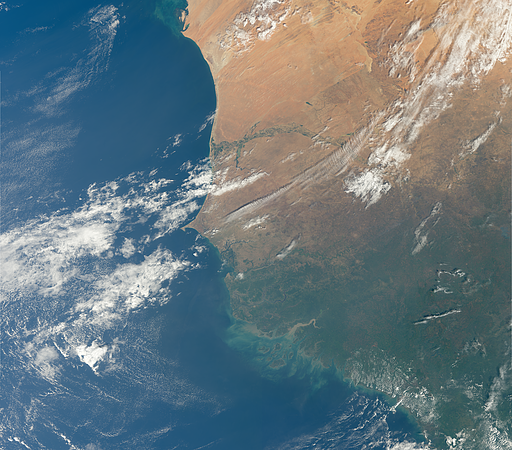

I mentioned at the end of the first part that the form the imagery is distributed in is fairly strange. I will try to explain here in more detail what i mean. Here is what the OLCI data looks like for the visual range spectral bands. I combined the single package data shown previously with the next ‘scene’, generating a somewhat longer strip:

Sentinel-3 OLCI two image strip in product grid

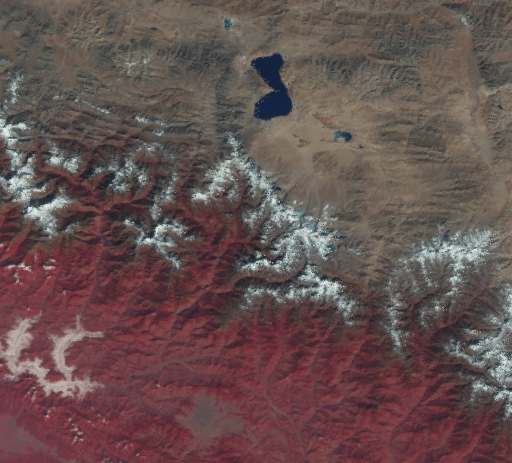

What is strange about this is that this is not actually the view recorded by the satellite. For comparison, here is a MODIS image – same day, same area, but due to the different orbits slightly further east. This is from the MOD02 processing level, which means radiometrically calibrated radiances without any geometric processing, i.e. this is what the satellite actually sees.

You can observe the distortion at the edges of the swath – kind of like a fisheye photograph. This results primarily from the earth curvature. Remember, these are wide angle cameras; in case of MODIS the field of view covers more than 90 degrees. With OLCI you would expect something similar, with somewhat less distortion due to the narrower field of view, and asymmetric due to the tilted view. We don’t get this because the data is already re-sampled to a different coordinate system. That coordinate system is still a view oriented system that maps the image samples onto an ellipsoid based on the position of the satellite. In the ESA documentation this is called product grid or quasi-cartesian coordinate system.
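The size of this edge distortion can be estimated with simple spherical geometry. The following is a minimal sketch and not taken from any mission documentation: it assumes a spherical earth with a radius of 6371 km, and the orbit altitudes and view angles in the example (705 km and 55° off nadir for MODIS, 814.5 km and a 68.5° field of view tilted by 12.6° for OLCI) are nominal figures assumed here. The function names are made up for illustration.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumption: spherical earth, mean radius


def ground_distance_km(view_angle_deg, altitude_km):
    """Distance along the surface from the nadir point to the point seen
    at the given angle off nadir (angle measured at the satellite)."""
    theta = math.radians(view_angle_deg)
    # Law of sines in the triangle earth centre - satellite - ground point
    sin_zenith = (EARTH_RADIUS_KM + altitude_km) / EARTH_RADIUS_KM * math.sin(theta)
    if abs(sin_zenith) > 1.0:
        raise ValueError("line of sight misses the earth")
    central_angle = math.asin(sin_zenith) - theta
    return EARTH_RADIUS_KM * central_angle


def ground_km_per_degree(view_angle_deg, altitude_km, step_deg=0.01):
    """Surface distance covered per degree of view angle, estimated with
    a small central finite difference around the given angle."""
    d1 = ground_distance_km(view_angle_deg - step_deg / 2, altitude_km)
    d2 = ground_distance_km(view_angle_deg + step_deg / 2, altitude_km)
    return (d2 - d1) / step_deg


# Assumed nominal geometry: MODIS swath edge, and the two OLCI swath edges
# that follow from a 68.5 degree field of view tilted by 12.6 degrees.
for name, altitude, angles in [("MODIS", 705.0, (0.0, 55.0)),
                               ("OLCI", 814.5, (0.0, 21.65, 46.85))]:
    for angle in angles:
        print(name, angle,
              round(ground_distance_km(angle, altitude)),
              round(ground_km_per_degree(angle, altitude), 1))
```

Under these assumptions the same angular step covers several times more ground at the MODIS swath edge than at nadir, which is the stretching visible in the unprojected image. For OLCI the two swath edges sit at different angles, hence the expected asymmetry.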

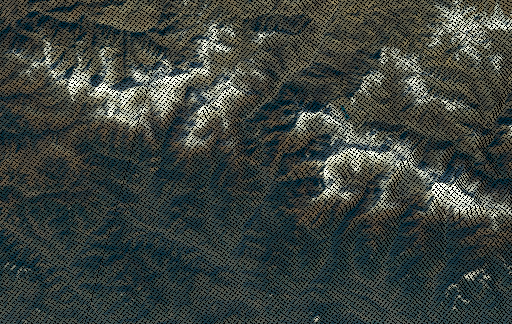

This re-sampling is done without mixing samples just by moving and duplicating them. For OLCI this looks like this near the western edge of the recording swath:

Sentinel-3 OLCI pixel duplication near swath edge
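What moving and duplicating samples means can be illustrated in one dimension. The sketch below is not ESA's actual algorithm, just a nearest-sample fill under the assumption that every cell of the regular product grid takes the value of the closest original sample. The function name and the toy positions are made up for illustration.

```python
def resample_nearest(sample_pos, sample_val, cell_size, n_cells):
    """Fill a regular 1-D grid by copying the nearest original sample
    into each cell. No values are mixed or interpolated."""
    out = []
    for i in range(n_cells):
        centre = (i + 0.5) * cell_size
        # index of the original sample closest to this cell centre
        nearest = min(range(len(sample_pos)),
                      key=lambda k: abs(sample_pos[k] - centre))
        out.append(sample_val[nearest])
    return out


# Sample spacing grows towards the swath edge (right side of the list)
positions = [0.5, 1.5, 2.6, 4.0, 5.8]
values = ["a", "b", "c", "d", "e"]
print(resample_nearest(positions, values, cell_size=1.0, n_cells=6))
# ['a', 'b', 'c', 'd', 'd', 'e']
```

Where the original samples are spaced further apart than the grid cells, the same sample ends up in more than one cell, which is the duplication pattern seen near the swath edge.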

For SLSTR things are more complicated due to a more complex scan geometry. Here this also results in samples being dropped (so called orphan pixels) when several original samples would occupy the same pixel in the product grid.
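The opposite case, several samples competing for one cell, can be sketched the same way. This is a toy illustration under the assumption that the first sample to arrive keeps the cell; which sample the actual processing retains is not something this sketch claims to know. The function name and values are hypothetical.

```python
import math


def bin_samples(positions, values, cell_size, n_cells):
    """Assign each sample to the grid cell it falls into. The first sample
    in a cell is kept; later ones are collected as orphans."""
    grid = [None] * n_cells
    orphans = []
    for pos, val in zip(positions, values):
        cell = math.floor(pos / cell_size)
        if not 0 <= cell < n_cells:
            continue  # sample lies outside the grid
        if grid[cell] is None:
            grid[cell] = val
        else:
            orphans.append((cell, val))
    return grid, orphans


grid, orphans = bin_samples([0.2, 0.7, 1.4, 2.1, 2.3, 2.9],
                            [10, 11, 12, 13, 14, 15],
                            cell_size=1.0, n_cells=4)
print(grid)     # [10, 12, 13, None]
print(orphans)  # [(0, 11), (2, 14), (2, 15)]
```

The empty last cell is the kind of gap that would then be filled by duplicating a neighbouring sample.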

I have been trying to find an explanation on why this is done in the documentation and also thought about possible reasons. The only advantage you really have is that images in this grid are free of large scale distortion which is of course an advantage when you work with the data in that coordinate system. But normally you will use a different, standardized coordinate system that is not tied to the specific satellite orbit and when doing that this re-sampling is at best a minor nuisance, at worst the source of serious problems.

Getting the data out

So what do you need to get the data out of this strange view specific product grid? For this you need the supplementary data, in particular geo_coordinates.nc for OLCI and geodetic_*.nc for SLSTR. These files contain what is called geolocation arrays in GDAL terminology. You can use them to reproject the data into a standard coordinate system of your choosing. However, the approach GDAL takes for geolocation array based reprojection assumes that there is a continuous mapping between the grids. This is not the case here, which leads to some artefacts and suboptimal results. There is a distinct lack of good open source solutions for this particular task despite this being a fairly common requirement, so i have no real recommendation here.
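For those who want to try the GDAL route anyway, one common way to attach geolocation arrays to a raster is a VRT file with a `GEOLOCATION` metadata domain, which `gdalwarp -geoloc` can then use. The following is an untested sketch of how such a VRT could be generated. The function name, the band file and variable names (`Oa08_radiance.nc`, `longitude`, `latitude`), the raster size and the data type are assumptions to be checked against the actual files.

```python
import xml.etree.ElementTree as ET

# WKT of the geographic coordinate system the lon/lat arrays refer to
WGS84_WKT = ('GEOGCS["WGS 84",DATUM["WGS_1984",'
             'SPHEROID["WGS 84",6378137,298.257223563]],'
             'PRIMEM["Greenwich",0],UNIT["degree",0.0174532925199433]]')


def build_geoloc_vrt(band_ds, lon_ds, lat_ds, width, height, data_type="UInt16"):
    """Return VRT XML that wraps one band and points GDAL at full
    resolution longitude/latitude arrays of the same size."""
    root = ET.Element("VRTDataset", rasterXSize=str(width), rasterYSize=str(height))
    geoloc = ET.SubElement(root, "Metadata", domain="GEOLOCATION")
    items = {
        "SRS": WGS84_WKT,
        "X_DATASET": lon_ds, "X_BAND": "1",
        "Y_DATASET": lat_ds, "Y_BAND": "1",
        # one geolocation value per image pixel, no offset
        "PIXEL_OFFSET": "0", "LINE_OFFSET": "0",
        "PIXEL_STEP": "1", "LINE_STEP": "1",
    }
    for key, value in items.items():
        ET.SubElement(geoloc, "MDI", key=key).text = value
    band = ET.SubElement(root, "VRTRasterBand", dataType=data_type, band="1")
    source = ET.SubElement(band, "SimpleSource")
    ET.SubElement(source, "SourceFilename", relativeToVRT="0").text = band_ds
    ET.SubElement(source, "SourceBand").text = "1"
    return ET.tostring(root, encoding="unicode")


# Placeholder names and sizes; read the real dimensions from the product.
vrt_text = build_geoloc_vrt('NETCDF:"Oa08_radiance.nc":Oa08_radiance',
                            'NETCDF:"geo_coordinates.nc":longitude',
                            'NETCDF:"geo_coordinates.nc":latitude',
                            width=4865, height=4091)
print(vrt_text)
```

Written to a file, this could be warped with something like `gdalwarp -geoloc -t_srs EPSG:32645 olci.vrt olci_utm.tif` (the UTM zone is just an example). If the coordinate variables are stored as scaled integers, they would probably need to be converted to plain degrees first, for example with `gdal_translate -unscale`. The limitations regarding discontinuities described above still apply.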

What you get when you do this is something like the following. This is in UTM projection.

Sentinel-3 OLCI image reprojected to UTM

To help understand what is going on here i also produced a version in which only those pixels of the final grid that contain a source data sample are colored. Here are two crops from this, one from near the nadir point and one towards the western edge of the swath:

Point by point reprojection near nadir

Point by point reprojection near swath edge

This shows how sample density and therefore resolution differs between different parts of the image and how topography affects the sample distribution. You can also see that the image is combined from the views of several cameras and the edge interrupts the sample pattern a bit. By the way, in terms of color values these edges between the image strips are usually not visible; you can sometimes see them on very uniform surfaces or in the form of discontinuities in clouds.
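The principle of such a point by point view can be sketched in a few lines. This is not the tool used for the images here, only a minimal illustration: it drops each sample into the cell of a regular target grid that contains its coordinates and leaves all other cells empty. To stay self-contained it uses a plain longitude/latitude grid instead of UTM, and all names and numbers are made up.

```python
import math


def forward_mark(lats, lons, values, lat_top, lon_left, cell_deg, n_rows, n_cols):
    """Forward-map samples into a regular lon/lat grid. Cells without any
    source sample stay None (these would be left uncolored)."""
    grid = [[None] * n_cols for _ in range(n_rows)]
    for lat, lon, val in zip(lats, lons, values):
        row = math.floor((lat_top - lat) / cell_deg)
        col = math.floor((lon - lon_left) / cell_deg)
        if 0 <= row < n_rows and 0 <= col < n_cols:
            grid[row][col] = val  # a later sample overwrites an earlier one
    return grid


grid = forward_mark(lats=[9.9, 9.2, 5.0], lons=[20.1, 21.3, 20.1],
                    values=[1, 2, 3],
                    lat_top=10.0, lon_left=20.0, cell_deg=0.5,
                    n_rows=2, n_cols=3)
for row in grid:
    print(row)
# [1, None, None]
# [None, None, 2]
```

With a target grid finer than the sample spacing most cells stay empty, and the pattern of filled cells directly shows where the original samples are located.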

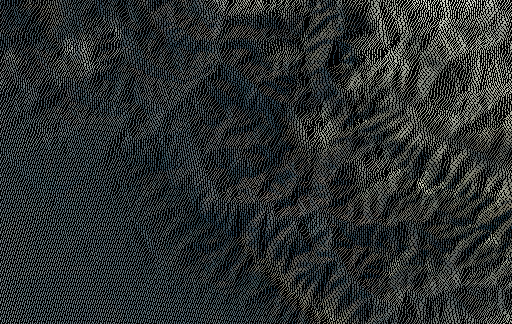

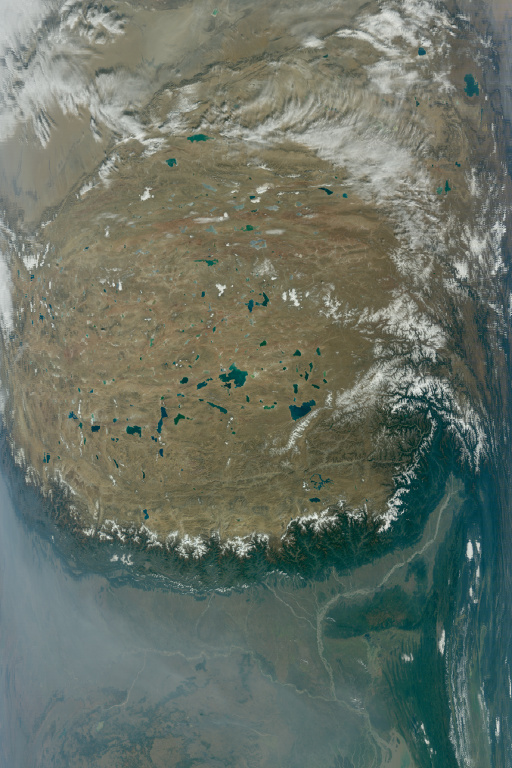

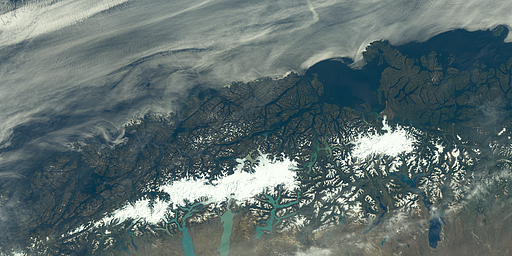

Apart from longitude and latitude grids the files also contain an altitude grid. One can assume this is based on the same elevation data that is used to transform the view based product grid into the earth centered geographic coordinates. So let’s have a look at this. For the Himalaya-Tibet area this looks like SRTM with fairly poor quality void fills – pretty boring, but all right.

Altitude grid in the Himalaya region

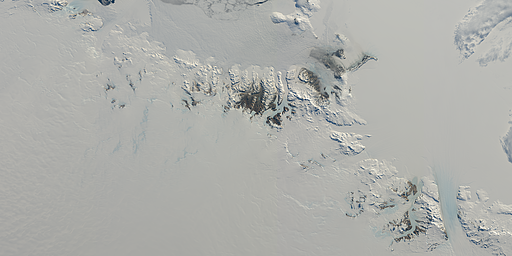

But in polar regions this is more interesting.

Altitude grid at the Antarctic peninsula

Altitude grid in Novaya Zemlya

Not much more to say about this except: Come on, seriously?

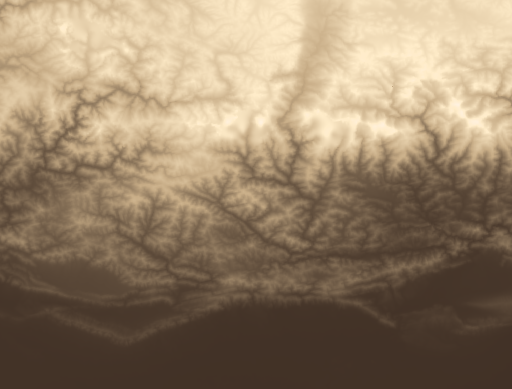

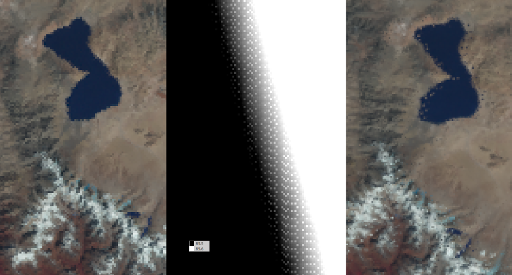

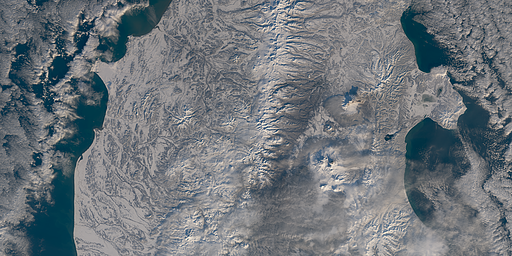

So far we have looked at the OLCI data. The SLSTR images are quite similar, but there are of course separate geolocation arrays for the nadir and oblique images. However, it looks like the geolocation data here is faulty. What you get when you reproject the SLSTR data based on the geodetic_*.nc is something like this:

Coordinate grid errors leading to incorrect resampling

The problem seems to manifest primarily in high relief areas so it is likely users only interested in ocean data don’t see it. There is also a separate low resolution ‘tie point’ based georeferencing data set and it is possible that this does not exhibit the same problem. I would also be happy to be proven wrong here of course – although this seems unlikely.

Original product grid (left), longitude component of the coordinate grid (center) and reprojected image (right)

The observed effect is much stronger in the oblique view imagery by the way.
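A simple way to look for irregularities of this kind in a coordinate grid is to check whether longitude changes in a consistent direction along each image row. The sketch below is only a rough diagnostic under that assumption. A reversal is not by itself proof of an error, the date line is not handled, and the function name and numbers are made up.

```python
def find_reversals(lon_row, tolerance=0.0):
    """Return the indices i where the step from lon_row[i] to lon_row[i + 1]
    runs against the dominant direction of the row."""
    steps = [b - a for a, b in zip(lon_row, lon_row[1:])]
    direction = 1 if sum(steps) >= 0 else -1
    return [i for i, step in enumerate(steps) if step * direction < -tolerance]


row = [10.00, 10.01, 10.02, 10.015, 10.03, 10.04]
print(find_reversals(row))  # [2]
```

Applied row by row to the longitude array, the number of flagged positions per row gives a quick impression of where the grid folds back on itself.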

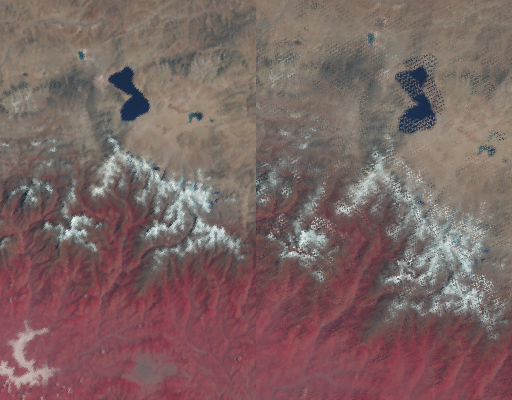

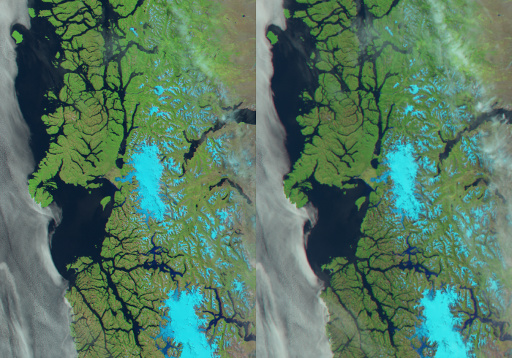

SLSTR geolocation error in oblique view (left: product grid, right: reprojected)

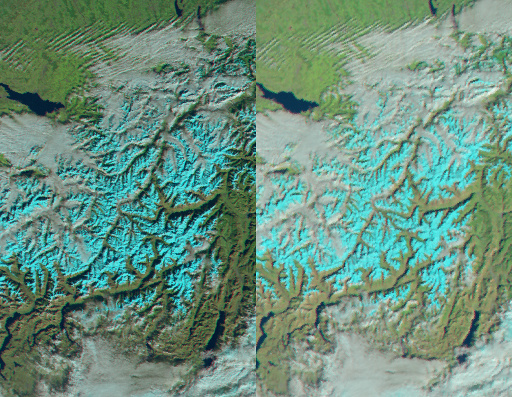

This overall severely limits evaluation of the SLSTR data. What can be said so far is that otherwise the data itself looks pretty decent. There seems to be a smaller misalignment (a few hundred meters) between the VIS/NIR and the SWIR data as visible in the following examples in form of color fringes. It exists in both the nadir and the oblique view data sets. All of the SLSTR images in the product grid look kind of jaggy due to the way they are produced by moving and duplicating pixels, combined with the curved scan geometry of the sensor.

Sentinel-3 SLSTR example in false color infrared (left: nadir, right: oblique), click for full resolution

The supplemental oblique view image is an interesting feature. Its primary purpose is most likely to facilitate better atmosphere characterization by comparing two views recorded with different paths through the atmosphere. Another reason is that, just like with the laterally tilted view, you avoid specular reflection of the sun itself. You might observe when comparing the two images that the oblique view is usually brighter. This has several reasons:

- The path through the atmosphere is longer so more light is scattered.

- Since it is a rear view it is recorded slightly later with a slightly higher sun position.

- Since the rear view is pointed northwards on the day side it is looking at the sun lit flanks of mountains on the northern hemisphere – on the southern hemisphere it’s the other way round.
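The first of these points can be roughly quantified. The sketch below uses the plane-parallel approximation, in which the path through the atmosphere grows with one over the cosine of the view zenith angle. The 55° used for the oblique view is an assumed nominal figure, and the function name is made up.

```python
import math


def relative_path_length(view_zenith_deg):
    """Atmospheric path length relative to a vertical path, using the
    plane-parallel approximation (reasonable well away from the horizon)."""
    return 1.0 / math.cos(math.radians(view_zenith_deg))


print(round(relative_path_length(0.0), 2))   # vertical path: 1.0
print(round(relative_path_length(55.0), 2))  # assumed oblique view zenith angle
```

Under this assumption the oblique view looks through clearly more atmosphere than the nadir view, which fits the brighter, hazier appearance.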

Other possible applications of the oblique view could be cloud detection and BRDF characterization of the surface. Note that since the earth surface is much further away from the satellite in this configuration, the resolution of the oblique images is overall significantly lower.
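How much further away the surface is can be estimated from the viewing geometry. This is a sketch for a spherical earth, with an assumed orbit altitude of 814.5 km and an assumed view zenith angle of 55° at the surface for the oblique view; the function name is made up.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumption: spherical earth, mean radius


def slant_range_km(surface_zenith_deg, altitude_km):
    """Distance from a ground point to the satellite when the satellite is
    seen at the given zenith angle from that point (law of cosines)."""
    z = math.radians(surface_zenith_deg)
    r = EARTH_RADIUS_KM
    return math.sqrt((r + altitude_km) ** 2 - (r * math.sin(z)) ** 2) - r * math.cos(z)


nadir = slant_range_km(0.0, 814.5)
oblique = slant_range_km(55.0, 814.5)
print(round(nadir, 1), round(oblique, 1), round(oblique / nadir, 2))
```

For a fixed angular sample size the ground footprint grows at least in proportion to this distance, and additionally gets stretched along the view direction because the surface is seen at a slant.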

So far we had an overall look at the data and how it is structured, the main characteristics and how it can be used. In the third part of this review i will make some comparisons with other image sources. Due to the mentioned issues with the SLSTR geolocation grids this will likely focus on the OLCI data only.

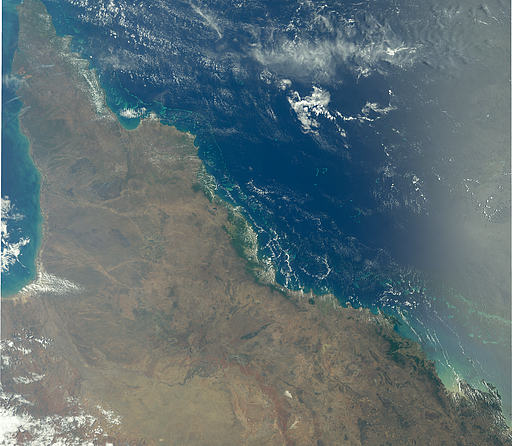

Here are a few more sample images. The first one nicely illustrates the asymmetric view, with sun glint on the far right near the maximum in the southern hemisphere summer in northeast Australia.

And here some more samples of various areas from around the world.

March 28, 2018 at 22:30

Hello, great article thank you! I am wondering, how did you make the point-by-point reprojection?

April 11, 2018 at 13:09

Forward reprojection is my own development but GDAL also does this (though it does not deal so well with discontinuities in the transformation).

April 2, 2019 at 09:53

Hi Chris,

I have recently started working in the area of EO. I am finding your posts very informative. Thank you for writing them.

I came across this post (and the one before) after trying to find a way to reproject SLSTR data in Python. As you mentioned in your reply to Jeff, this should be possible with GDAL.

Although I don’t doubt what you have written above, I am surprised (and disappointed) that reprojection is so complicated for SLSTR images, especially since ESA’s SNAP program seems to manage it OK. Perhaps I am missing something with the quality of the reprojection in SNAP.

Given my relative inexperience in this area, do you have any advice or tips for reprojection of SLSTR images with GDAL?

April 2, 2019 at 11:49

Hello David,

i don’t know how SNAP performs reprojection – they probably either use the tie point grid or they use some undocumented knowledge about the data structure that allows them to avoid the geolocation array errors discussed.

Forward reprojection in coordinate systems with discontinuities – and this is what you have when you use the geolocation arrays – is a tough subject and how to best approach this depends significantly on what your goal is ultimately for the results, and how much computing resources you are willing to invest. I have never used GDAL on the SLSTR data but i suspect in high relief areas where the discussed error manifests it will indeed look crappy. That is however of course not GDAL’s fault.

April 2, 2019 at 12:53

Hi Chris,

Thanks for your reply. Fortunately, I am mainly looking at sea scenes, with a few dotted landmasses with low(ish) relief, so I shouldn’t be addressing the most difficult possible situation.

I am going to try a few things out, including a different python library called SatPy. If I come up with a solution that might be helpful for your readers I would happily post a code snippet here.

February 23, 2022 at 11:31

Hi David,

Did you ever happen to come up with a solution using SatPy or something else for working with SLSTR data using python?